I’ve written a few posts about mind-uploading, focusing mainly on its risks and philosophical problems. In each of these posts I’ve drawn distinctions between different varieties of “uploading” and suggested that some are less prone to risks and problems than others. So far, the distinctions I’ve drawn have been of my own choosing, based on what I’ve read about the topic over the years. But in his article “A Framework for Approaches to Transfer of a Mind’s Substrate”, Sim Bamford offers an alternative, slightly more sophisticated, framework for thinking about these issues. I want to share that framework in this post.

Bamford looks like a pretty interesting guy. His research is in the area of neural engineering. Consequently, he brings a much-needed practical orientation to this debate. Indeed, in the article in question, in addition to developing his framework, he offers glimpses into his own work on neural prosthetics, and freely admits to the limitations of the current technology. Needless to say, despite all this much-needed practicality, I’m still primarily interested in the more philosophical musings and that’s where the focus lies in this post.

In what follows we are going to do three things. First, we’ll offer a general characterisation of mind-uploading (or, as Bamford rightly prefers, “mind substrate transfer” or MST) and consider three different methods of MST that are advocated at the moment. Second, we’ll try to organise these different methods into a simple two-by-two matrix. And then third, we’ll see whether there are any other approaches to uploading that could occupy the unused space in this matrix.

1. Three Approaches to Mind-Uploading

MST is the process whereby “you” (or whatever it is that your identity consists in) are transferred from your current, biological substrate to an alternative, technological substrate. MST is (for now) purest science fiction. It’s important not to forget that at the outset. Nevertheless, there are various advocates and some of these advocates attach themselves to particular methods of MST. In each case, they believe that this method, pending further research and technological development, could allow us to preserve identity across different substrates.

Three methods seem particularly prominent in the current conversation. The first is what might be called the gradual replacement by parts method. The idea here is that the human brain (in which your identity is currently “housed”) could be replaced by neuroprosthetics. This is already being done to some extent, with things like cochlear implants and artificial limbs replacing the input and output channels of the nervous system. Bamford, being an expert in this area, also details some recent examples of “closed loop” prosthetics, which act as both input and output. The idea of the brain being gradually replaced by prosthetics is not completely outlandish, but it would require significant advances in current technology.

The second method of MST can be called the reconstruction via scan-method. This is probably the method that has most captured public attention. The idea is that one creates a “copy” or model of the brain and then locates this copy in another medium. There are choices to be made about how fine-grained the copy really is (and this feeds into the discussion below). One proposal, discussed by Bostrom and Sandberg, is to emulate the brain right down to the level of individual neurons and synapses (“whole brain emulation”).

The third method of MST can be called the reconstruction from behaviour-method. It tries to capture information at the behavioural-personal level and then use this information to recreate the person in another medium. This is obviously a much more abstract version of uploading, perhaps best not called uploading at all. It takes behavioural characteristics, traits and publicly disclosed thoughts — not brain states — to be the true essence of identity. It might seem a little bit odd — it certainly does to me — but it does have its fans. For example, Martine Rothblatt’s Terasem Foundation has an ongoing project researching this method.

Before we structure and order these methods, I want to pause to highlight an interesting test that Bamford proposes for determining their plausibility. To be fair, Bamford proposes this somewhat in passing, and doesn’t single it out and label it as I am doing, but I think it’s worth flagging for special attention:

Bamford’s Test: Suppose that each of the above methods succeeds in producing a synthetic version of a particular person (A and synth-A). Suppose further that, when asked, synth-A insists that it shares an identity with A; that it simply is A. Then ask yourself: is there anything about the procedure that led to the creation of synth-A that makes their insistence any more plausible than the claim of someone alive today to be the reincarnation of Florence Nightingale?

Obviously this is intended somewhat in jest, and would need to be fleshed out in more detail before it became a useful test of plausibility. But it makes a serious point. Bamford claims that “identity” is a largely fictional construction of persons and societies, and so what really matters in this debate is whether the synthetic copy is socially accepted as having the same identity. Clearly the person claiming to be Florence Nightingale would not be. Are the proposed uploading methods enough to make a difference? My own feeling is that I’d be more inclined to accept the claim of synth-A if he or she had passed through the gradual replacement by parts method than any of the others. I say this partly because that method allows for constant checking of identity preservation. But that’s just my feeling. I’d be curious to hear what others think.

2. The Proposed Framework

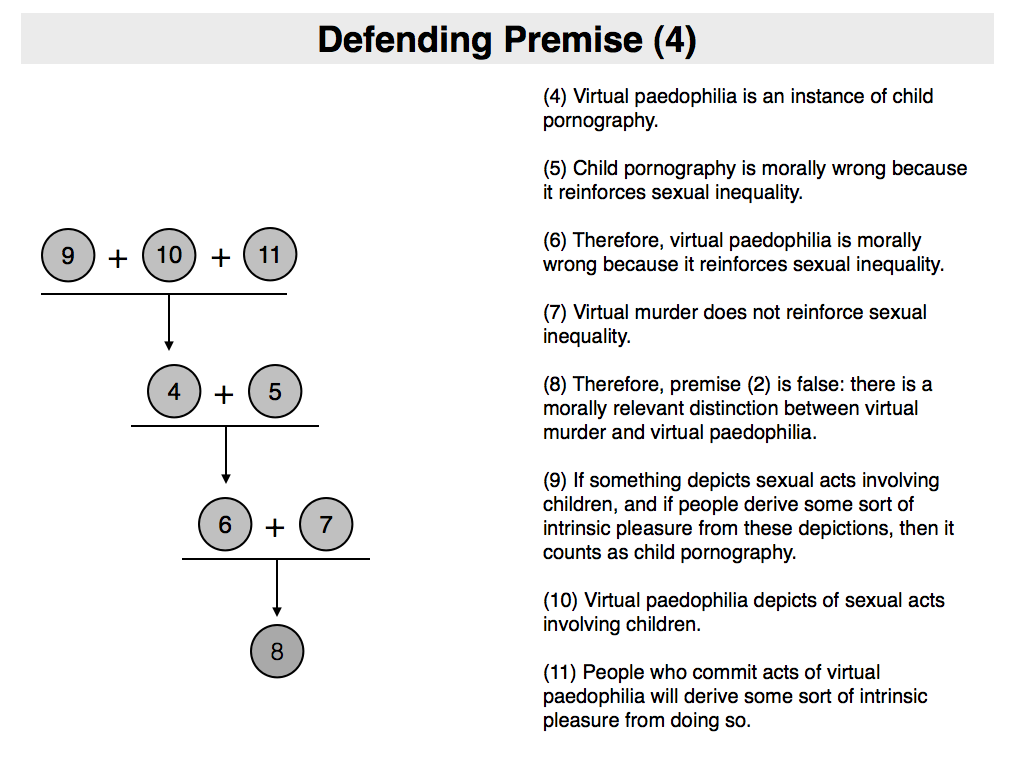

Bamford’s suggestion is that the various methods of uploading can be categorised along two dimensions (or, for simplicity’s sake, in a two-by-two matrix). The first of those dimensions differentiates between “on-line” and “off-line” versions of uploading; the second between “bottom-up” and “top-down” versions. Let’s unpack these terms in a little more detail.

The on-line/off-line distinction refers to the nature of the connection between the original and synthetic versions of the mind. In the off-line case, information sufficient for creating the synthetic version is gathered first, and then the synthetic version is assembled. The two versions can run side-by-side, but there is no causal nexus between them such that they work together to implement the same individual (for at least some period of time). By way of contrast, in the on-line case there is a causal nexus between the two versions and they do operate in parallel to implement the same individual. It is easy to see that the two reconstruction methods of MST fall inside the “off-line” bracket, whereas the gradual replacement by parts method falls inside the “on-line” bracket.

The bottom-up/top-down distinction refers to the type of information that is being captured and copied by the procedure. The top-down method tries to capture the highest level of information about the person. The bottom-up method tries to capture the lowest level of information necessary for making a reliable simulation. Clearly, the reconstruction from behaviour method would count as being top-down, since the focus there is at the behavioural level. Contrariwise, the gradual replacement by parts method and the reconstruction via scan method are bottom-up. They focus on lower, neurological levels of information.

That gives us the following two-by-two matrix, with the three methods categorised accordingly.

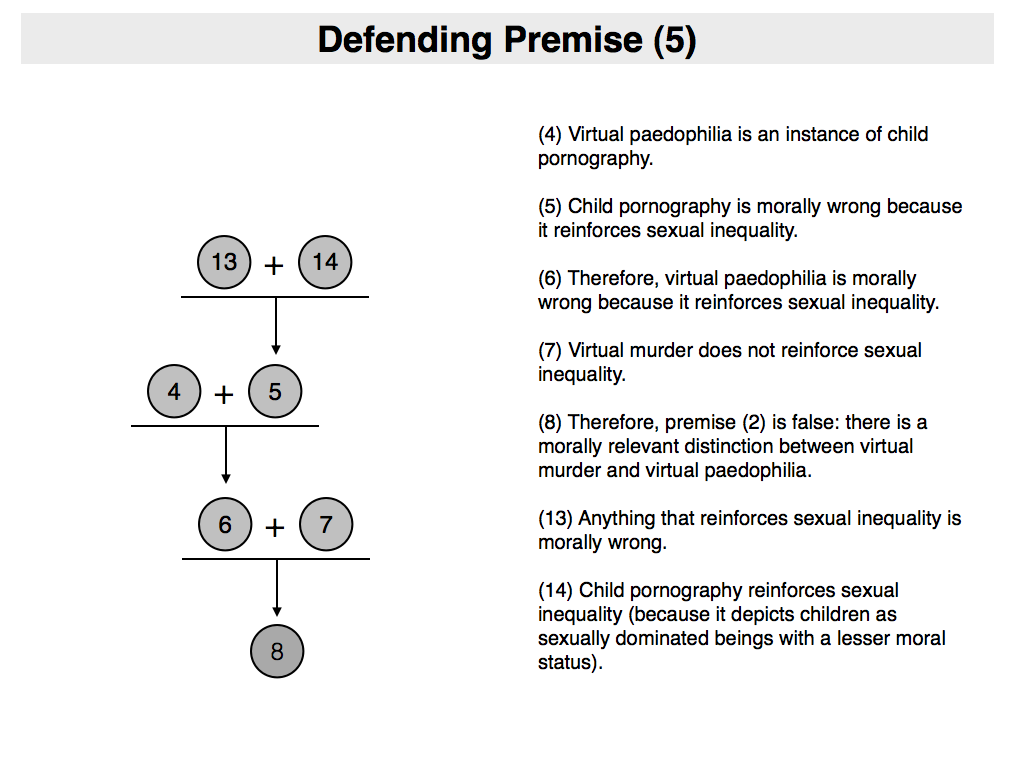

3. Is there a fourth method?

The most striking fact about this proposed framework is that one cell of the matrix is empty: the one for a method that is both top-down and on-line. This raises the obvious question: is there an (as yet underexplored) fourth method for MST? Bamford suggests that there might be. The approach would involve the use of a synthetic robot partner (almost like a symbiont).

The idea is roughly this: there needs to be some generic human-like substrate (the robot), with the same sensory and motor capacities as a regular human being, and with a control system modeled closely on the human brain. This system, however, needs to be “blank” (or as “blank” as is possible). The generic robot would be paired with a human being. It would learn from that human being, observing its behaviour, slowly acquiring the same action patterns and cognitive routines, until eventually it forms a complete “copy”. In this way it would exhibit the properties needed for a top-down method of transfer.

But how would it be on-line? For this, there needs to be some causal nexus between the robot and the original that allows them to work in parallel to implement the same identity. Bamford imagines a couple of mechanisms that might do the trick. The first would be to link the reward systems of the robot and the human. The thought is that this would constrain them to work toward common ends (presumably the original human’s, since the human is the more cognitively enriched of the two when the process begins). The second mechanism would be to force the human and robot bodies to overlap in some way. This could be achieved if the robot were initially like an exoskeleton over the human body, or if the robot’s control system were connected to sensors and actuators implanted in the human.

As Bamford notes, this method is highly speculative, and no one seems to have proposed it yet. Nevertheless, if we are willing to accept the other possibilities within the matrix (and that’s a big “if”), this does look like another possibility that might be worth exploring.